New State Law Slaps $10,000 Fines on Anyone Using AI for Therapy

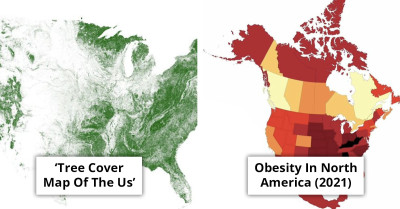

The legislation also separates wellness-focused apps from therapeutic AI services.

Illinois just dropped a legal brick on anyone using AI for mental health advice, and the fine is not small: up to $10,000. It is the first state to draw a hard line around AI emotional support, and it has everyone asking what counts as “therapy” when the screen talks back.

For a lot of people, it started the same way: therapy costs were rising and waits for appointments were long. They turned to AI tools like ChatGPT for comfort, coping prompts, and “I get it” replies. The law is basically saying those vibes are not the same thing as real therapeutic care.

The Wellness and Oversight for Psychological Resources (WOPR) Act is now forcing Illinois to separate wellness chat from therapeutic AI, and the fallout could spread fast.

Illinois has officially become the first U.S. state to ban artificial intelligence from providing mental health advice, introducing fines of up to $10,000 for anyone who violates the law.

The WOPR Act does not target AI apps in general. It targets AI used for mental health advice, with fines that can reach $10,000.

Growing Reliance on AI for Emotional Support

With therapy costs rising and access to mental health care often limited, more people have turned to AI-powered tools, including ChatGPT, for emotional support. These platforms are seen by some as a cheaper, more accessible alternative to traditional therapy.

Yet experts stress that AI cannot replicate the nuanced, empathetic understanding that human therapists provide.

The WOPR Act marks a pivotal moment for technology in mental health care. As the first state to impose strict limits on artificial intelligence in therapeutic settings, Illinois is responding to growing concerns about what AI can and cannot do for people seeking mental health support. AI mental health applications have surged, but these tools often fall short of the nuanced care that human therapists provide. The law recognizes that AI may help identify patterns and offer resources, yet it lacks the human touch that effective therapy depends on. Therapeutic relationships rest on trust and emotional connection, which algorithms cannot replace. The legislation takes a cautious approach to technology in a sensitive area, putting the well-being of people seeking support ahead of the convenience AI might offer.


Meanwhile, the same people who leaned on AI because emotional support was pricey and hard to access are now staring at a law that treats those interactions differently.


The tricky part is that Illinois is trying to draw a line between “wellness-focused” tools and anything that looks like therapeutic guidance, even when the wording sounds similar.

Illinois’ decision could become a model for other states grappling with how to balance technological innovation with patient safety, especially as AI continues to evolve and integrate into everyday life.

The law also reflects a growing awareness of the ethical dilemmas that come with AI in therapy. AI can process vast amounts of data, but it cannot form the deep emotional connections that effective therapeutic relationships require. The legislation reinforces the idea that technology should enhance the therapeutic process rather than replace the human interactions at the foundation of mental health care. The $10,000 fines signal a strong commitment to keeping the human touch at the forefront of therapy.

If Illinois becomes the model other states copy, the next time someone asks an AI chatbot for help, the legal risk might follow them out of state.

Illinois' legislation banning the use of artificial intelligence in therapy is a response to the limits of technology in mental health care. The Wellness and Oversight for Psychological Resources Act, which imposes hefty fines for violations, reflects a growing recognition that genuine human connection is essential in therapy. The law aims to preserve the therapeutic relationship: AI might provide some level of support, but it lacks the empathy and understanding that trained professionals offer. By prioritizing human interaction and professional oversight, Illinois is trying to keep mental health services rooted in personal engagement.

The decision aligns with longstanding psychological research that emphasizes the importance of personal connection in therapeutic settings. As artificial intelligence continues to develop, regulations that prioritize patient safety and ethical standards become more urgent. Illinois is setting a precedent that may influence how other states approach technology in mental health care.

Nobody wants to pay $10,000 for a chat window that sounded like it cared.
